Tech

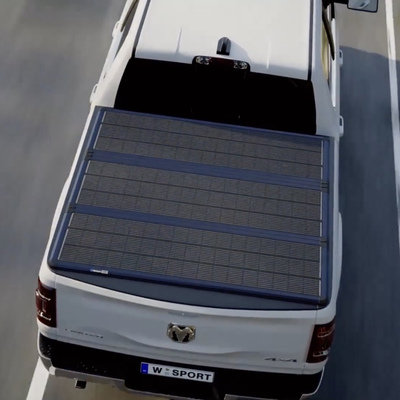

A Solar-Power-Harvesting Tonneau Cover for Pickup Trucks

Worksport's Solis and Cor Hub portable generator

July 28

8 Comments

HTX Studio Explores the Design of Smart, Roving Trash Cans

This is a fantastic example of building on the work of others, then 10x'ing...

July 25

The Perfect Non-Invasive Mold Detector for Homes: A Specially Trained Dog

You can't pet a wrecking bar

July 11

The Axion is Closer to the Flying Car We All Imagined

FusionFlight's jet-powered, diesel-fueled solution to personal flying craft

July 9

2 Comments

A Smart Design for a Robotic Tennis Partner

The Acemate Tennis Robot is like a pitching machine on wheels

July 1

5 Favorites

China Moved an Entire Historical Building Complex Using Walking Robots

As we saw earlier this month, Samsung has developed flat robots used to park...

July 1

2 Comments

A Wireless, Color E-Paper Sign that Can Go 200 Days Without Recharging

Samsung's EMDX

June 17

1 Comment

Combustion Inc's Braun-Like Barbecue Accessories

For some, barbecuing is an art; for others, a science. For that latter camp,...

June 17

The Splay Max: A Folding Portable 35" Monitor

It's been four years since we looked at the Arovia Splay, a portable monitor...

June 11

U.C. Berkeley's Tiny Pogo Robot has a Unique Locomotion Style

SALTO has only one leg, and does more with less

June 10

World's First Self-Balancing Exoskeleton Allows People to Walk Again

Wandercraft's Eve is undergoing trials in America

June 9

Hyundai's Incredible WIA Autonomous Robot Parking Valets

They're fast and work in pairs

June 6

1 Comment

Caltech Develops Drone That Smoothly Transitions from Flight to Four-Wheeling

This is what we all thought of when we pictured flying cars

June 2

Growl: An AR Punching Bag for Training and Gaming

This Growl object is a wall-mounted punching bag featuring a built-in projection system. You'd...

May 22

The Loki Cleaning Robot, Here to End Janitors

To the list of jobs that will not exist in the future, we must...

May 22

A Cooler with a Built-In Air Conditioner

Because heaven forbid you break a sweat outside

May 22

1 Comment

Good or Bad? This System Records Entire Sporting Matches, But Highlights Just Your Child

A case where parents (probably) want their child's movements tracked by software

May 21

4 Comments

This Triple Boost Pro Adds Three Unfolding Screens to your Laptop

A display manufacturer called Aura has developed this Triple Boost 14" Pro, which adds...

May 13

Videos of Humanoid Robots Going Dangerously Berserk

This is why we need kill switches

May 7

4 Comments

From Switzerland, a Wheeled Dog-Like Robot for Carrying Cargo

The RIVR LEVA, spun off from ETH Zurich

May 5

Spacetop for Windows: AR Glasses that Provide a Virtual, Private 100" Display Space

Spacetop, which we covered in 2023, was supposed to be a laptop with no...

April 28

2 Comments

Augmented Carpentry: Measure Never, Cut Once

You look through a screen, the computer tells you where to cut the wood

April 16

2 Comments

Low-Cost Robot Hands Made of Measuring Tape

The material is surprisingly well-suited for delicate operations

April 15
